Brain waves, or oscillatory brain activity, are thought to play an important role in how different areas of the brain communicate. They are also altered in many diseases.

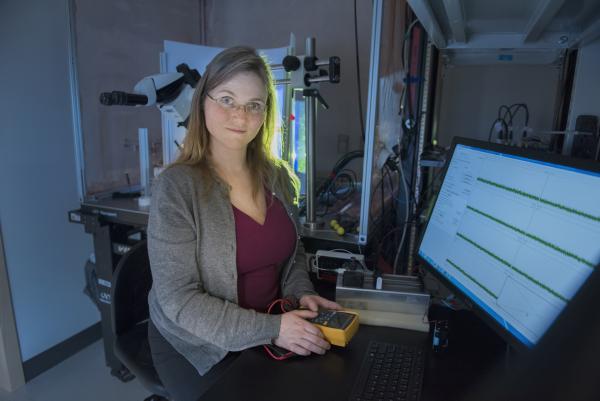

“We think these brain waves are like walkie-talkies tuned to the same frequency,” says Annabelle Singer, assistant professor in the Wallace H. Coulter Department of Biomedical Engineering at Georgia Tech and Emory University. Singer looks closely at brain waves in her research, which focuses on understanding how neural activity produces memories and protects brain health.

“Interestingly, one brain region can switch frequencies in order to control what other brain regions it talks to,” says Singer, who is also a researcher in the Petit Institute for Bioengineering and Bioscience at Georgia Tech.

She notes that current methods for assessing brain waves average them over long time scales, obscuring rapid and dynamic changes in communication between different brain regions. By combining signal processing with multiple machine learning methods, her lab has developed a new approach that captures those rapid changes.

The Singer team recently presented its research in a new paper in the journal eLife. The paper, titled “Sub-second Dynamics of Theta-Gamma Coupling in Hippocampal CA1,” details a new approach for investigating oscillatory brain dynamics broadly without having to average over long periods of time. Instead, says Singer, “it reveals sub-second changes in how brain regions communicate with each other.”

She adds, “The electric activity we record in the brain comes in different flavors, characterized by the frequency at which the brain wave is oscillating.” Characterizing these “flavors” is complicated by the fact that different oscillations are often coupled. For example, gamma oscillations may be nested within theta in the hippocampus. Changes in coupling are thought to reflect distinct brain states.
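For readers who want to see what “nested” means in practice, the idea can be sketched in a few lines of Python. This is only an illustration and is not the method from the eLife paper: the signals are synthetic, the frequency bands (6 to 10 Hz for theta, 30 to 50 Hz for gamma) are assumed for the example, the FFT-based filter is deliberately crude, and the function names are hypothetical. The measure used here is a normalized mean vector length, one common way to quantify how strongly gamma amplitude depends on theta phase.

```python
import numpy as np

def bandpass_fft(x, fs, lo, hi):
    """Crude band-pass: zero every FFT bin outside [lo, hi] Hz."""
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    spec = np.fft.rfft(x)
    spec[(freqs < lo) | (freqs > hi)] = 0.0
    return np.fft.irfft(spec, n=len(x))

def analytic_signal(x):
    """Analytic signal via the FFT (keeps only non-negative frequencies)."""
    n = len(x)
    weights = np.zeros(n)
    weights[0] = 1.0
    if n % 2 == 0:
        weights[n // 2] = 1.0
        weights[1:n // 2] = 2.0
    else:
        weights[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(np.fft.fft(x) * weights)

def coupling_strength(x, fs, theta=(6, 10), gamma=(30, 50)):
    """Normalized mean vector length of gamma amplitude over theta phase.

    Near 0 when gamma amplitude ignores theta phase; larger when gamma
    bursts cluster at a preferred theta phase. Band edges are assumptions.
    """
    theta_phase = np.angle(analytic_signal(bandpass_fft(x, fs, *theta)))
    gamma_amp = np.abs(analytic_signal(bandpass_fft(x, fs, *gamma)))
    vector = np.mean(gamma_amp * np.exp(1j * theta_phase))
    return np.abs(vector) / np.mean(gamma_amp)

# Synthetic signals: 10 seconds sampled at 1 kHz (illustrative values only).
fs = 1000
t = np.arange(0, 10, 1 / fs)
theta_wave = np.sin(2 * np.pi * 8 * t)    # 8 Hz "theta"
gamma_wave = np.sin(2 * np.pi * 40 * t)   # 40 Hz "gamma"

# Coupled: gamma amplitude rises and falls with the theta wave.
coupled = theta_wave + 0.25 * (1 + theta_wave) * gamma_wave
# Uncoupled: gamma amplitude is constant regardless of theta.
uncoupled = theta_wave + 0.25 * gamma_wave

print("coupled:  ", coupling_strength(coupled, fs))
print("uncoupled:", coupling_strength(uncoupled, fs))
```

The coupled signal should score clearly higher than the uncoupled one. Note that this sketch averages over the whole ten-second recording, which is exactly the kind of long-time-scale averaging the Singer lab's approach is designed to avoid.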

The Singer team describes a new way to separate single oscillation cycles into distinct states based on frequency and phase coupling. Using their new method, the researchers identified four theta-gamma coupling states in animal models. “There are four flavors – or theta-gamma coupling states – that we have identified, but there are many more kinds of brain waves,” Singer says. “What’s exciting is, we can track them with millisecond precision.”

So much in the realm of brain research involves not only listening to the noise that neurons are making and recording these micro-conversations, but understanding the language, and why it makes sense.

Previous research by the Singer lab and colleagues at the Massachusetts Institute of Technology (MIT) demonstrated that a combination of light and sound can improve cognitive and memory impairments similar to those seen in Alzheimer’s patients. The noninvasive treatment induces gamma oscillations (brain waves that oscillate around 40 times per second) and reduces the number of amyloid plaques found in the brain. In Alzheimer’s patients, abnormal levels of amyloid (a naturally occurring protein) form plaques that gather between neurons and disrupt cell function.

This recent paper, Singer says, “is basically all about gaining a better understanding of naturally occurring gamma in the brain.” Built on first author Lu Zhang’s elegant solution combining signal processing and machine learning, the latest research represents another clarifying step on the road to a better understanding of brain function and treatment for Alzheimer’s disease.

In addition to Zhang and Singer, the other authors were Chris Rozell (Petit Institute researcher and a professor with joint appointments in the Coulter Department and the School of Electrical and Computer Engineering at Georgia Tech, where he directs the Sensory Information Processing Lab) and John Lee (former postdoctoral researcher in Rozell’s lab, now with DSO Laboratories in Singapore).

Media Contact

Jerry Grillo

Communications Officer II

Parker H. Petit Institute for Bioengineering and Bioscience
